If you’ve been curious or even sceptical about the whole concept of scoring applicants with AI, you’re not the only one. AI has grown from an emerging trend in 2024 to an embedded workflow tool that over 76% of talent teams use every day. Yet most teams are still trying to figure out how to adopt it safely.

In a previous article, we reviewed the top candidate assessment software with AI scoring, focusing on the techniques and tradeoffs for each tool. Here, we explain the exact mechanism of how AI candidate screening works, what most people get wrong about it, and how to adopt this new technology with confidence.

What is AI candidate scoring? How does it work?

AI candidate scoring is the use of AI technologies, such as machine learning and large language models, to evaluate a job applicant’s performance. It is one of the various stages of an AI-powered candidate screening process, typically involving three steps:

- Input: You give an AI system an input, such as a candidate’s one-way video interview.

- Processing: The AI system analyses the input against predefined criteria

- Output: You receive a result (e.g., a candidate ranking, numeric score, or qualitative summary) that informs a hiring decision.
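The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: the `score_candidate` function, the `ScoringResult` fields, the criteria, and the sample transcript are all hypothetical, and a real system would use a trained model rather than simple phrase checks.

```python
from dataclasses import dataclass


@dataclass
class ScoringResult:
    candidate_id: str
    score: float   # share of criteria met, from 0.0 to 1.0
    summary: str   # short qualitative note for the human reviewer


def score_candidate(candidate_id: str, transcript: str,
                    criteria: dict[str, list[str]]) -> ScoringResult:
    """Processing step: check the input against predefined criteria."""
    text = transcript.lower()
    # A criterion counts as met if any of its cue phrases appears in the text.
    met = [name for name, cues in criteria.items()
           if any(cue in text for cue in cues)]
    score = len(met) / len(criteria) if criteria else 0.0
    summary = f"Met {len(met)} of {len(criteria)} criteria: {', '.join(met) or 'none'}"
    return ScoringResult(candidate_id, round(score, 2), summary)


# Input: a hypothetical transcript from a one-way video interview answer.
transcript = "I calmed an upset customer, then followed up by email the next day."
criteria = {
    "customer_focus": ["customer", "client"],
    "follow_through": ["followed up", "follow-up"],
    "teamwork": ["team", "colleague"],
}

# Output: a result that informs, but does not make, the hiring decision.
result = score_candidate("cand-001", transcript, criteria)
print(result.score, "|", result.summary)
```

The point of the sketch is the shape of the flow: the criteria are defined before any candidate is scored, and the output is something a recruiter reads rather than an automatic decision.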

Through this process, talent teams are able to automate parts of the hiring process and cut time-to-hire by up to 50%. But that depends on the kind of AI candidate scoring system they choose.

Think about this: if you're hiring for a customer-facing role and need to screen 2,000 applicants, is it better to rank candidates by resume match score, or to understand how they communicate before you've spoken to a single one of them?

Your answer determines not just the type of AI candidate scoring tool you choose, but also the techniques that tool needs to use. While the three steps above give you a working definition, there's a little more to know about the types, models, and techniques involved in AI candidate scoring.

The types, models and techniques involved in AI candidate scoring

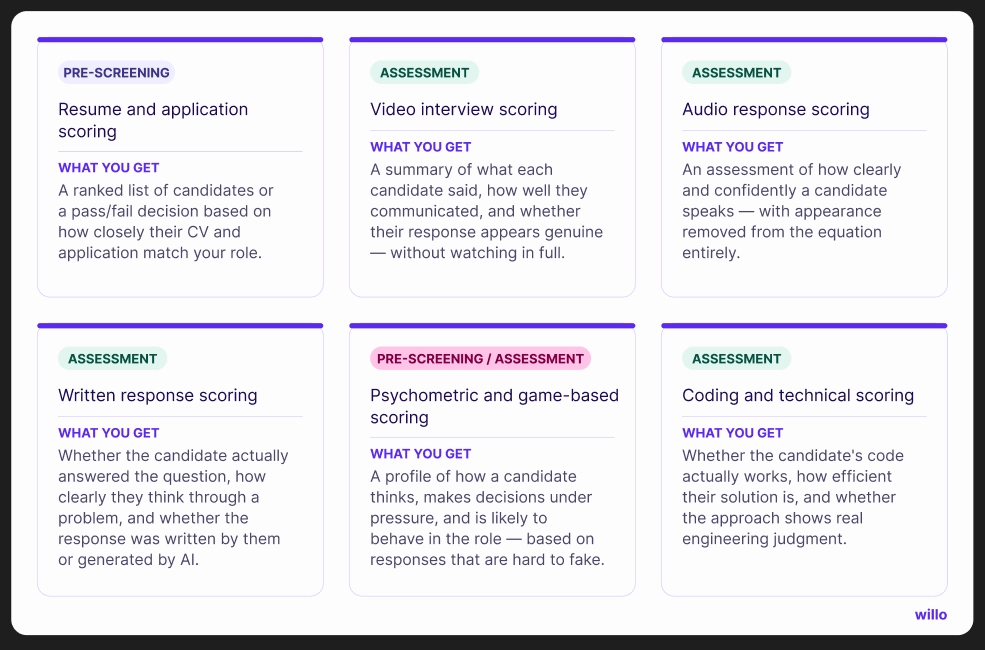

When you search for AI candidate scoring tools, you’ll find options like resume scoring, video interview scoring, and game-based assessment scoring. These are the types of AI candidate scoring tools, and they're a useful starting point.

But the form alone won't tell you whether a tool is right for you. Two video scoring tools can look identical and produce entirely different hiring signals, because what determines the output isn't the form. It's what's happening underneath it.

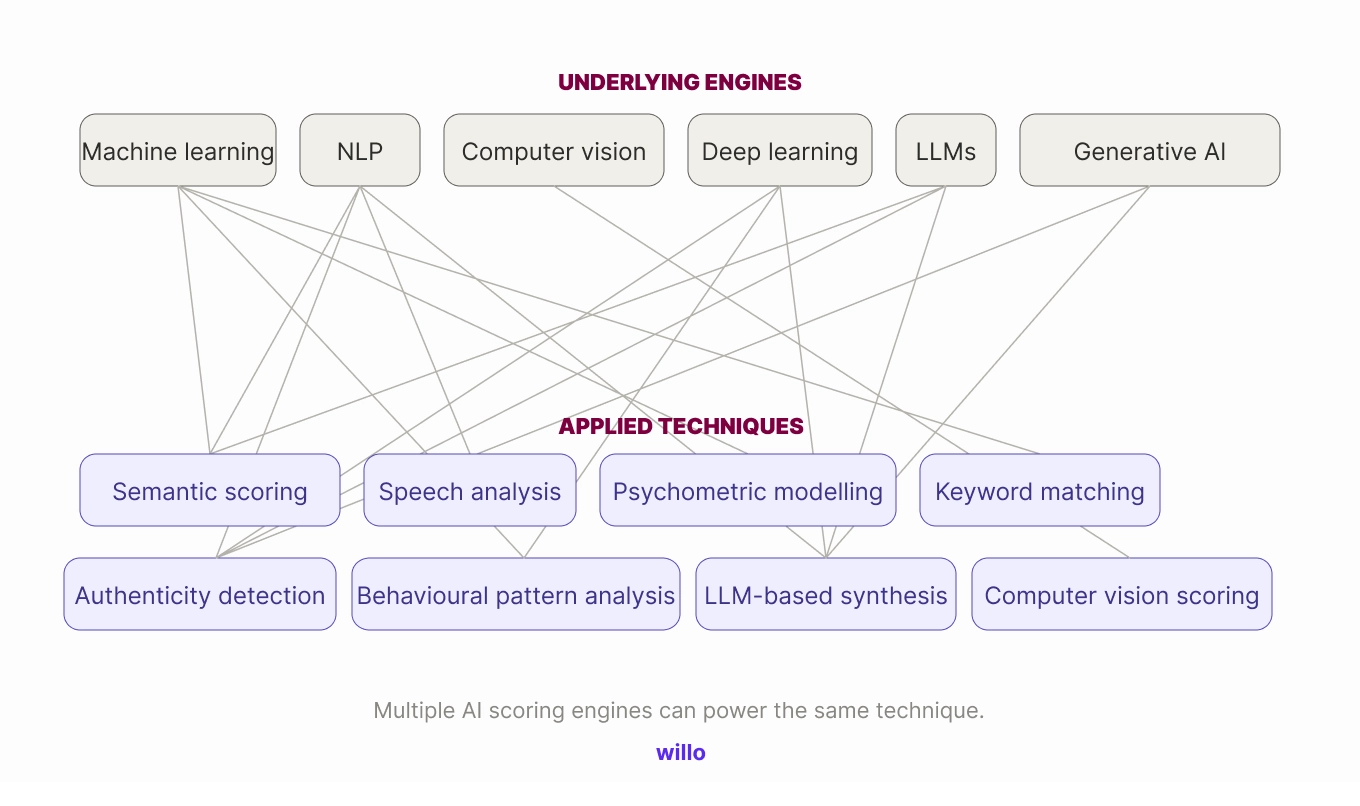

Underneath every AI candidate scoring tool are models: machine learning models, large language models (LLMs), and natural language processing (NLP) models. These are the engines that make automated evaluation possible. And each model can generate different candidate scores depending on the techniques applied to it.

Think of techniques as what those models actually do to candidate data. An LLM reading a resume produces a relevance summary. The same LLM reading a video transcript produces a qualitative assessment of how a candidate communicated. Same engine, different technique, different input, completely different hiring signal.

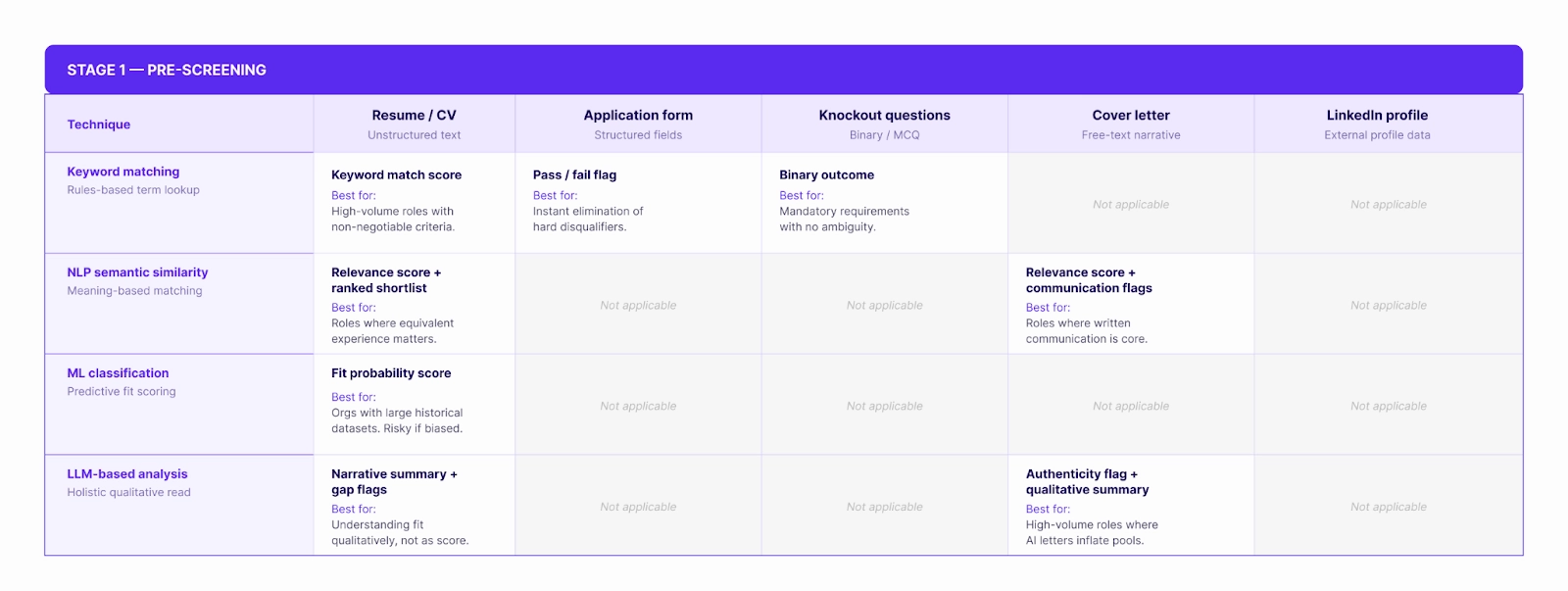

So if you're trying to identify the strongest candidates from 5,000 applications at the pre-screening stage, for example, you don't just need an AI resume scoring tool. You need one that uses semantic similarity scoring in addition to keyword matching, so you're surfacing genuine fit rather than candidates who know how to mirror a job description. Get that combination wrong, and you'll optimise for the wrong signal without realising it.
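To make the difference between keyword matching and meaning-based matching concrete, here is a toy sketch. It is an assumption-heavy illustration: real semantic similarity scoring typically compares text embeddings from a trained model, whereas this example uses a small hand-written concept map (`CONCEPTS`) as a stand-in. All names, keywords, and resume snippets are hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_score(resume: str, job_keywords: set[str]) -> float:
    """Share of job keywords that appear verbatim in the resume."""
    return len(tokens(resume) & job_keywords) / len(job_keywords)


# Hypothetical concept map standing in for an embedding model:
# each job concept lists phrasings that count as evidence for it.
CONCEPTS = {
    "customer support": {"support", "helpdesk", "complaints", "tickets"},
    "communication": {"communication", "presented", "explained", "wrote"},
    "problem solving": {"troubleshooting", "resolved", "diagnosed", "fixed"},
}


def concept_score(resume: str) -> float:
    """Share of job concepts with at least one piece of evidence in the resume."""
    words = tokens(resume)
    covered = [name for name, cues in CONCEPTS.items() if words & cues]
    return len(covered) / len(CONCEPTS)


job_keywords = {"support", "communication", "troubleshooting"}
mirrored = "Skills: support, communication, troubleshooting."
genuine = "Resolved 40 helpdesk tickets a week and explained fixes to non-technical users."

for label, resume in [("mirrored", mirrored), ("genuine", genuine)]:
    print(label, keyword_score(resume, job_keywords), concept_score(resume))
```

Keyword matching alone gives the resume that mirrors the job description a perfect score and the one describing real work a zero. The concept-based score recognises the second resume, but note that it does not penalise the first, which is one reason the two signals are read together and reviewed by a person.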

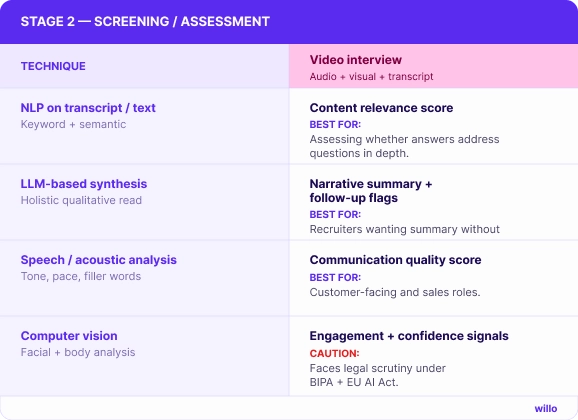

The matrix below maps every major technique against the input types and assessment stages where they apply — and what each combination actually produces.

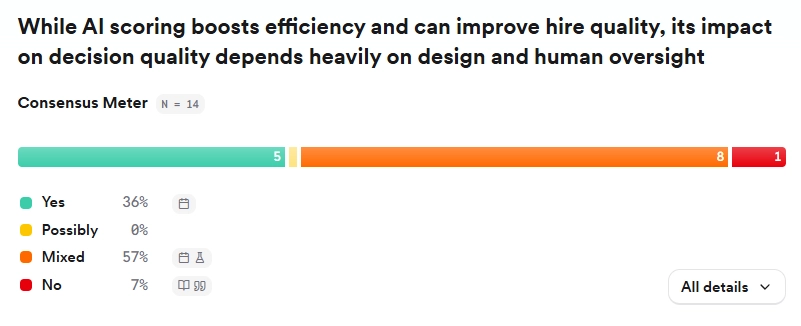

Does AI candidate scoring actually improve hiring decisions?

AI candidate scoring can improve hiring decisions when used with human oversight. A review of 14 peer-reviewed studies on AI scoring in hiring finds that the technology generally improves efficiency and often enhances match quality, but better decisions are not guaranteed.

The impact on decision quality depends heavily on how the system is designed and how much human judgment remains in the process.

4 ways AI scoring helps hiring teams

- It increases the speed of prioritisation: Research consistently shows that AI handling screening and scheduling reduces time-to-hire by between 28 and 47 per cent across organisations. This matters for candidate experience as much as recruiter efficiency. Candidates waiting weeks for any signal from an employer have a poor experience, regardless of the eventual outcome. AI that quickly identifies a top tier of candidates to engage first can meaningfully improve responsiveness.

- It helps drive consistency at scale: Human reviewers get tired, get influenced by the order in which they read CVs, and apply criteria unevenly across a large applicant pool. AI applies the same standards to application number one and application number five hundred. For high-volume roles where manual review of every application is genuinely impractical, that consistency has real value.

- It can reduce certain kinds of bias in early screening: When scoring criteria are explicitly defined and limited to job-relevant factors such as skills, experience, and qualifications, AI can surface candidates who might have been overlooked by a human reviewer applying unconscious preferences.

- It can improve overall hiring quality: Match quality can improve too, at least in controlled conditions. Some studies report candidate-job match accuracy improving from around 78 per cent to over 90 per cent when AI scoring is applied, a substantial gain over manual screening at scale.

These benefits are real but conditional. They depend on well-defined criteria, clean data, and — critically — a human being involved in the actual decision.

Where AI scoring often goes wrong

- It can reinforce hiring bias at a scale no human reviewer could: AI systems learn from historical hiring data, and if that data reflects past discrimination, the system quietly replicates it. The bias is structural, invisible, and self-reinforcing. A 2024 University of Washington study found that the kind of AI model underlying many resume screening tools favoured white-associated names in 85.1% of cases.

- Candidates can manipulate certain AI candidate scoring systems: Once candidates understand how an AI scoring system evaluates them (which keywords it favours, which response patterns it rewards), they optimise for those signals rather than demonstrating genuine fit. The system starts selecting candidates who are good at being evaluated by AI, which is not the same as candidates who are good at the job.

- It is bad at making decisions, and shouldn’t be used to make them: AI struggles with nuanced soft skills and contextual judgment. When scoring moves from informing a decision to making one, acting without human review, the judgment required to catch edge cases disappears. An AI system may not recognise a non-linear career path that a recruiter would have spotted. As a result, a candidate with an unusual background who would have thrived in the role may never reach the hiring manager’s desk.

How to choose the right AI candidate scoring tool for your team

To choose the right AI candidate scoring tool for your team, you need to ask the following key questions:

- Where in your hiring process do you need scoring — and what candidate data do you have there?

- What do you actually need to know about the candidate at that stage?

- Does the output inform your decision or make it for you?

- Can you explain the output to a candidate if challenged?

Most hiring teams approach AI candidate scoring tools by asking about volume — how many candidates can it screen at once? Then integration — does it connect with our ATS? Then pricing.

These are not bad questions. They are just the right questions asked at the wrong time. In most cases, the answers would lead you to the most capable-sounding tool rather than the most appropriate one.

The questions that should come first are these.

Where in your hiring process do you need AI scoring — and what input do you have there?

Before evaluating any tool, map the specific stage you're trying to improve and identify what candidate data you're actually collecting there. If you're collecting resumes and application form responses, you need a tool optimised for text-based inputs. If you're collecting recorded video responses, you need a tool built to handle audio, visual, and transcript data.

This is the starting point because it determines everything downstream. A tool built for resume triage at the top of the funnel is a fundamentally different product from one built for video assessment mid-funnel, even if both carry the AI candidate scoring label. Buying a video scoring tool when your bottleneck is resume triage — or vice versa — means you're solving the wrong problem regardless of how good the tool is.

What do you actually need to know about the candidate at that stage?

This is where understanding AI scoring techniques comes in. If you need to know whether a candidate meets basic role requirements, keyword matching or rules-based filtering is sufficient. If you need to understand how a candidate thinks and communicates, a system that can analyse video transcripts or written responses is more appropriate.

Choosing the right system for the job doesn’t just improve hiring decisions; it can also save you money. Because almost every “automated” hiring product carries an AI label today, it’s easy to confuse what you actually need with what the market sells.

For instance, if you need to know whether a candidate meets a set of basic, non-negotiable requirements like location and right to work, a simple rules-based filtering system or a four-minute Zapier-to-ATS configuration will do that job. Unless you need a mix of scoring scenarios, buying a sophisticated AI scoring tool for a problem that a checkbox can solve is an unnecessary expense.
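To show how little machinery a checkbox-style requirement needs, here is a minimal rules-based filter. It is only a sketch: the `Application` fields, the `ALLOWED_LOCATIONS` set, and the sample candidates are hypothetical, and in practice the same rules would usually be configured in an ATS or form tool rather than written as code.

```python
from dataclasses import dataclass


@dataclass
class Application:
    candidate_id: str
    right_to_work: bool
    location: str


# Hypothetical list of locations the role can be based in.
ALLOWED_LOCATIONS = {"london", "manchester"}


def failed_requirements(app: Application) -> list[str]:
    """Return the non-negotiable requirements this application fails (empty if none)."""
    reasons = []
    if not app.right_to_work:
        reasons.append("no right to work")
    if app.location.strip().lower() not in ALLOWED_LOCATIONS:
        reasons.append(f"location not supported: {app.location}")
    return reasons


applications = [
    Application("cand-001", True, "London"),
    Application("cand-002", False, "Manchester"),
    Application("cand-003", True, "Lisbon"),
]

results = {app.candidate_id: failed_requirements(app) for app in applications}
for candidate_id, reasons in results.items():
    print(candidate_id, "passes" if not reasons else "fails: " + "; ".join(reasons))
```

No model is involved, and every outcome comes with a plain-language reason.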

Does the output inform your decision or make it for you?

The mindset here is not that "AI should never decide anything." Rather, AI scoring tools should not auto-reject, auto-advance, or otherwise act on a candidate's performance without human review, especially when the score is based on subjective, non-binary inputs.

If a candidate answers "No" to "Do you have the right to work in this country?", auto-rejecting that candidate is a great use of automation for a binary, non-negotiable criterion. There is no human judgment required.

The opposite is true when an algorithm auto-rejects a candidate because their resume scores 61 out of 100, without a human ever seeing it. This process introduces quality and legal risk that the efficiency gain rarely justifies.

If you need an automated ranking but still need control over who gets into the final shortlist, you should ask specifically: at what point in the output does a human re-enter the process? The answer should tell you everything you need to know about the tool’s suitability.
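One way to picture the distinction between informing and deciding is a routing rule in which only a binary criterion can trigger an automatic rejection, while a score can only change the order of the human review queue. The sketch below is illustrative: the `Route` values, the `route` function, and the priority cut-off of 75 are assumptions, not a recommended configuration.

```python
from enum import Enum


class Route(Enum):
    AUTO_REJECT = "auto_reject"
    HUMAN_REVIEW = "human_review"


def route(right_to_work: bool, resume_score: int) -> tuple[Route, str]:
    """Decide what happens next and record why."""
    # Binary, non-negotiable criterion: automation can act on this alone.
    if not right_to_work:
        return Route.AUTO_REJECT, "binary requirement not met: right to work"
    # Subjective score: it never rejects anyone, it only sets review priority.
    priority = "high" if resume_score >= 75 else "standard"  # hypothetical cut-off
    return Route.HUMAN_REVIEW, f"score {resume_score}/100, {priority} priority"


print(route(True, 61))
print(route(True, 88))
print(route(False, 95))
```

Under this rule, the candidate who scores 61 out of 100 still reaches a person, just later in the queue than the candidate who scores 88.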

Can you explain the output to a candidate if challenged?

Explainability is a big part of AI candidate screening adoption. As AI hiring legislation expands, through frameworks like the EU AI Act, New York City's Local Law 144, and Illinois' AI Video Interview Act, the ability to explain and audit automated hiring decisions is moving from best practice to legal requirement.

If a candidate asks why they were screened out, your tool should be able to tell you what it evaluated, how it reached its conclusion, and what criteria it applied. Auditability is not a nice-to-have feature. It is the difference between a defensible process and a liability.
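As a rough sketch of what an auditable output could look like, the example below stores what was evaluated, the weight and rating for each criterion, the supporting evidence, and the person accountable for the decision. The record layout, field names, weights, and sample values are hypothetical; none of the regulations mentioned above prescribes this exact format.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class CriterionResult:
    criterion: str
    weight: float    # relative importance of this criterion
    rating: float    # 0.0 to 1.0, produced by the scoring step
    evidence: str    # what in the candidate's response supports the rating


def build_audit_record(candidate_id: str, results: list[CriterionResult],
                       reviewer: str) -> dict:
    """Combine criterion ratings into a weighted score and keep the full trail."""
    total_weight = sum(r.weight for r in results)
    score = sum(r.weight * r.rating for r in results) / total_weight
    return {
        "candidate_id": candidate_id,
        "score": round(score, 2),
        "criteria": [asdict(r) for r in results],
        "human_reviewer": reviewer,
    }


record = build_audit_record(
    "cand-001",
    [
        CriterionResult("communication", 0.6, 0.8,
                        "Explained a refund policy clearly in answer 2"),
        CriterionResult("product knowledge", 0.4, 0.5,
                        "Named the product but gave no usage example"),
    ],
    reviewer="recruiter-17",
)
print(json.dumps(record, indent=2))
```

With a record like this, the question "why was I screened out?" can be answered criterion by criterion rather than with a single number.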

Once you have clear answers to these four questions, volume, integration, and pricing become straightforward filters rather than primary decision drivers. At this point, you're looking for the tool that matches your stage, your input, your signal needs, and keeps a human accountable for the outcome. Not just the most impressive tool.

7 expert tips for safe, effective, and defensible AI candidate scoring

- Define what a “good” use of automated scoring means for your team before buying any tool

- Score for demonstrated capability, not just credentials

- Build in deliberate flexibility for the candidates who don't fit conventional patterns

- Treat AI scores as signals, not the final decision

- Tell candidates that AI scoring is part of your process and help them prepare

- Consistently audit your scoring outputs for bias

- Use purpose-built recruitment AI, not generic LLMs

1. Define what a “good” use of automated scoring means for your team before buying any tool

Before configuring any scoring system, answer these questions clearly: What does success look like in this role at six months? What behaviours or demonstrated capabilities predict that success? What are the genuine must-haves versus the nice-to-haves you've been treating as requirements?

As Willo's CEO, Euan Cameron, has put it: "Even the smartest tech won't deliver results unless you're clear on what great looks like."

The tool operationalises whatever definition of quality you give it. And if that definition is vague, outdated, or borrowed from an old job description, you'll get fast results that consistently point you in the wrong direction.

2. Score for demonstrated capability, not credentials

The most trusted signal in hiring, according to the 2026 Hiring Trends Report, is behavioural interviewing with real examples, cited by 67.7% of hiring professionals. In other words, hiring teams trust what candidates show, not what they list. Your AI scoring framework should reflect the same hierarchy.

A scoring system that weights years of experience or educational pedigree over demonstrated reasoning and communication will systematically undervalue candidates whose capability came through non-traditional paths.

Justin Krucki, Global Talent Acquisition Manager at Miovision, put it plainly on the Looks Good on Paper podcast:

"It's not about the number of years of experience to me. It's about what you've accomplished. I can't count how many candidates I've come across where they have maybe a little bit less years of experience than an ideal candidate, but what they've accomplished is so impressive that it equates to somebody who's maybe spent eight or nine years in a role."

Build scoring criteria around outcomes and demonstrated behaviours. Let the credential signals be secondary context, not primary filters.

3. Build in deliberate flexibility for the candidates who don't fit the pattern

Don't score exclusively within a tight percentile band and ignore everything outside it. Instead, set a threshold that handles volume, then keep human review active for candidates who fall just below it. That way, your scoring system ensures that even candidates whose career paths are non-linear, or whose most relevant experience didn't come from a traditional employer, get a chance.

Teams using Willo Intelligence approach scoring this way. They use AI to surface and summarise signals, then trust recruiters to make the call on edge cases that a score alone can't resolve.
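The idea of a volume threshold with a human review band just below it can be sketched as follows. This is a generic illustration and not a description of how any particular product works: the `triage` function, the threshold of 70, the 10-point margin, and the sample scores are all assumptions.

```python
def triage(scores: dict[str, int], threshold: int = 70,
           review_margin: int = 10) -> dict[str, list[str]]:
    """Split candidates into a shortlist, a borderline band, and the rest."""
    buckets = {"shortlist": [], "borderline_review": [], "deprioritised": []}
    # Highest scores first, so each bucket is already in priority order.
    for candidate_id, score in sorted(scores.items(),
                                      key=lambda item: item[1], reverse=True):
        if score >= threshold:
            buckets["shortlist"].append(candidate_id)
        elif score >= threshold - review_margin:
            # Just below the line: a recruiter looks before anything else happens.
            buckets["borderline_review"].append(candidate_id)
        else:
            buckets["deprioritised"].append(candidate_id)
    return buckets


scores = {"cand-a": 82, "cand-b": 66, "cand-c": 61, "cand-d": 40}
buckets = triage(scores)
print(buckets)
```

The width of the margin is a judgment call. A wider band costs more recruiter time but gives more candidates with unconventional backgrounds a second look.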

4. Use AI scores as signals, not decisions

Use scoring to manage volume, surface relevant candidates faster, and bring consistency to early-stage filtering. Reserve the final shortlist, the offer decision, and the assessment of cultural fit and potential for people.

This is the practice that most directly separates responsible adoption from risky automation. 78.7% of hiring professionals we’ve talked to said final hiring decisions must stay human-led. Not a single respondent believed automation could effectively handle all hiring stages. That consensus is strong and consistent across company sizes, industries, and geographies.

5. Tell candidates that AI scoring is part of your process and help them prepare

This practice costs nothing and protects almost everything. Candidates who know they're being scored by AI can prepare accordingly, respond more authentically, and engage with the process on honest terms.

The damage from undisclosed AI scoring isn't abstract. Julia Fulton, Head of Talent at Float, described the broader principle:

"The level of clarity and transparency is something we try to do. It lasts longer than the interaction."

Candidates who have a good experience with your process — even if they don't get the role — become advocates. Candidates who feel deceived become detractors.

Practical disclosure doesn't require a legal disclaimer at the bottom of an application form. It means telling candidates at the start of the process which stages involve AI-assisted evaluation, what is being assessed, and what role a human plays in the decision. That information changes how candidates show up and positively influences how your employer brand is perceived in the market.

6. Audit your scoring outputs for bias, and do it more than once

Fairness in AI scoring is an ongoing practice. Surprisingly, only 22.6% of hiring teams actively track fairness outcomes across their process. That means a significant majority of teams deploying AI scoring tools have no systematic way of knowing whether those tools are producing equitable results.

Reviewing how scores are distributed across candidate demographics is a practical way to audit AI scoring for fairness. If candidates from certain backgrounds consistently score lower without a role-relevant reason, that's a signal your criteria might need revision.
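A simple version of that distribution check compares pass rates across groups. The sketch below computes each group's selection rate at a given threshold and its ratio to the highest-rate group, then flags ratios under 0.8. The 0.8 cut-off follows the commonly cited "four-fifths" rule of thumb, which is an addition here rather than something this article prescribes, and the group labels and scores are invented sample data.

```python
from collections import defaultdict


def selection_rates(records: list[tuple[str, int]], threshold: int) -> dict[str, float]:
    """Share of candidates in each group whose score meets the threshold."""
    passed: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, score in records:
        total[group] += 1
        if score >= threshold:
            passed[group] += 1
    return {group: passed[group] / total[group] for group in total}


def impact_ratios(rates: dict[str, float]) -> dict[str, float]:
    """Each group's selection rate relative to the highest-rate group."""
    best = max(rates.values())
    return {group: (rate / best if best else 0.0) for group, rate in rates.items()}


# Invented sample data: (demographic group, AI score out of 100).
records = [
    ("group_a", 80), ("group_a", 72), ("group_a", 65), ("group_a", 90),
    ("group_b", 71), ("group_b", 60), ("group_b", 55), ("group_b", 68),
]

rates = selection_rates(records, threshold=70)
ratios = impact_ratios(rates)
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
print(rates, ratios, flagged)
```

A flag is a prompt to investigate the criteria and the data, not proof of discrimination on its own. With small samples like this one, the ratios are too noisy to draw conclusions from.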

7. Use purpose-built recruitment AI, not generic LLMs

Off-the-shelf models like ChatGPT excel at general tasks but lack the hiring-specific safeguards needed for fair evaluation. Purpose-built tools are trained on real hiring data with bias-aware design, giving you more reliable insights without constant manual corrections. They understand interview context, recognise transferable skills, and avoid common pitfalls like over-penalising accents or non-linear phrasing.

Is AI candidate scoring good or bad for recruitment?

AI candidate scoring is not inherently good or bad for hiring. It is a capability. And like any capability, what it produces depends entirely on how you use it.

Used well, it gives recruiting teams the ability to apply consistent, defined criteria across hundreds or thousands of candidates without the fatigue, inconsistency, and unconscious bias that manual review accumulates at scale.

Used poorly, without clarity on what you're trying to learn, without the right technique for your input, and without a human accountable for the final decision, it produces fast results that point you in the wrong direction. It selects for candidates who are good at being evaluated by AI. It encodes the patterns of whoever got hired before. It removes the judgment that catches what algorithms miss.

The difference between those two outcomes is not which tool you buy. It is whether you understand what you need before you evaluate anything, and whether you keep human judgment in the loop after the AI has done its work. That is the standard worth holding any AI candidate scoring system to — including your own process once you adopt one.